Matrici of Matrici

Charles Hermite

| Charles Hermite | |
|---|---|
| Born | 24 December 1822 |
| Died | 14 January 1901 (aged 78) |
| Nationality | French |
| Alma mater | Collège Henri IV, Sorbonne; Collège Louis-le-Grand, Sorbonne |
| Known for | Proof that e is transcendental; Hermitian adjoint; Hermitian form; Hermitian function; Hermitian matrix; Hermitian metric; Hermitian operator; Hermite polynomials; Hermitian transpose; Hermitian wavelet |
| Scientific career | |
| Fields | Mathematics |
| Doctoral advisor | Eugène Charles Catalan |
| Doctoral students | Léon Charve; Henri Padé; Mihailo Petrović; Henri Poincaré; Thomas Stieltjes; Jules Tannery |

Charles Hermite (French pronunciation: [ʃaʁl ɛʁˈmit]) FRS FRSE MIAS (24 December 1822 – 14 January 1901) was a French mathematician who did research concerning number theory, quadratic forms, invariant theory, orthogonal polynomials, elliptic functions, and algebra.

Hermite polynomials, Hermite interpolation, Hermite normal form, Hermitian operators, and cubic Hermite splines are named in his honor. One of his students was Henri Poincaré.

He was the first to prove that e, the base of natural logarithms, is a transcendental number. His methods were used later by Ferdinand von Lindemann to prove that π is transcendental.

Life

Hermite was born in Dieuze, Moselle, on 24 December 1822,[1] with a deformity in his right foot that would impair his gait throughout his life. He was the sixth of seven children of Ferdinand Hermite and his wife, Madeleine née Lallemand. Ferdinand worked in the drapery business of Madeleine's family while also pursuing a career as an artist. The drapery business relocated to Nancy in 1828, and so did the family.[2]

Hermite obtained his secondary education at Collège de Nancy and then, in Paris, at Collège Henri IV and at the Lycée Louis-le-Grand.[1] He read some of Joseph-Louis Lagrange's writings on the solution of numerical equations and Carl Friedrich Gauss's publications on number theory.

Hermite wanted to take his higher education at École Polytechnique, a military academy renowned for excellence in mathematics, science, and engineering. Tutored by mathematician Eugène Charles Catalan, Hermite devoted a year to preparing for the notoriously difficult entrance examination.[2] In 1842 he was admitted to the school.[1] However, after one year the school would not allow Hermite to continue his studies there because of his deformed foot. He struggled to regain his admission to the school, but the administration imposed strict conditions. Hermite did not accept this, and he quit the École Polytechnique without graduating.[2]

In 1842, Nouvelles Annales de Mathématiques published Hermite's first original contribution to mathematics, a simple proof of Niels Abel's proposition concerning the impossibility of an algebraic solution to equations of the fifth degree.[1]

A correspondence with Carl Jacobi, begun in 1843 and continued the next year, resulted in the insertion, in the complete edition of Jacobi's works, of two articles by Hermite, one concerning the extension to Abelian functions of one of the theorems of Abel on elliptic functions, and the other concerning the transformation of elliptic functions.[1]

After spending five years working privately towards his degree, during which he befriended the eminent mathematicians Joseph Bertrand, Carl Gustav Jacob Jacobi, and Joseph Liouville, he took and passed the examinations for the baccalauréat, which he was awarded in 1847. He married Joseph Bertrand's sister, Louise Bertrand, in 1848.[2]

In 1848, Hermite returned to the École Polytechnique as répétiteur and examinateur d'admission. In 1856 he contracted smallpox. Through the influence of Augustin-Louis Cauchy and of a nun who nursed him, he resumed the practice of his Catholic faith.[1] In July 1856, he was elected to the French Academy of Sciences. In 1869, he succeeded Jean-Marie Duhamel as professor of mathematics, both at the École Polytechnique, where he remained until 1876, and at the University of Paris, where he remained until his death. From 1862 to 1873 he was lecturer at the École Normale Supérieure. Upon his 70th birthday, he was promoted to grand officer in the French Legion of Honour.[1]

Hermite died in Paris on 14 January 1901,[1] aged 78.

Mathematical structure

It is assumed below that spacetime is endowed with a coordinate system corresponding to an inertial frame. This provides an origin, which is necessary in order to treat spacetime as a vector space. The choice is not really physically motivated, since there is no reason a canonical origin (a "central" event in spacetime) should exist. One can get away with less structure, that of an affine space, but this would needlessly complicate the discussion and would not reflect how flat spacetime is normally treated mathematically in modern introductory literature.

For an overview, Minkowski space is a 4-dimensional real vector space equipped with a nondegenerate, symmetric bilinear form on the tangent space at each point in spacetime, here simply called the Minkowski inner product, with metric signature either (+ − − −) or (− + + +). The tangent space at each event is a vector space of the same dimension as spacetime, 4.

Tangent vectors

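In practice, one need not be concerned with the tangent spaces. The vector space nature of Minkowski space allows for the canonical identification of vectors in tangent spaces at points (events) with vectors (points, events) in Minkowski space itself. See e.g. Lee (2003, Proposition 3.8) or Lee (2012, Proposition 3.13). These identifications are routinely done in mathematics. They can be expressed formally in Cartesian coordinates as[12]

$$(x^0, x^1, x^2, x^3) \;\leftrightarrow\; \left. x^\mu \mathbf{e}_\mu \right|_p \;\leftrightarrow\; \left. x^\mu \mathbf{e}_\mu \right|_q, \qquad \left. \mathbf{e}_\mu \right|_p = \left. \frac{\partial}{\partial x^\mu} \right|_p,$$

with summation over the repeated index $\mu = 0, 1, 2, 3$.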

Here p and q are any two events and the second basis vector identification is referred to as parallel transport. The first identification is the canonical identification of vectors in the tangent space at any point with vectors in the space itself. The appearance of basis vectors in tangent spaces as first order differential operators is due to this identification. It is motivated by the observation that a geometrical tangent vector can be associated in a one-to-one manner with a directional derivative operator on the set of smooth functions. This is promoted to a definition of tangent vectors in manifolds that are not necessarily embedded in $\mathbb{R}^n$. This definition of tangent vectors is not the only possible one, as ordinary n-tuples can be used as well.

For some purposes it is desirable to identify tangent vectors at a point p with displacement vectors at p, which is, of course, admissible by essentially the same canonical identification.[13] The identifications of vectors referred to above in the mathematical setting can correspondingly be found in a more physical and explicitly geometrical setting in Misner, Thorne & Wheeler (1973). They offer various degrees of sophistication (and rigor) depending on which part of the material one chooses to read.

Minkowski space


In mathematical physics, Minkowski space (or Minkowski spacetime) (/mɪŋˈkɔːfski, -ˈkɒf-/[1]) is a combination of three-dimensional Euclidean space and time into a four-dimensional manifold where the spacetime interval between any two events is independent of the inertial frame of reference in which they are recorded. Although initially developed by mathematician Hermann Minkowski for Maxwell's equations of electromagnetism, the mathematical structure of Minkowski spacetime was shown to be implied by the postulates of special relativity.[2]

Minkowski space is closely associated with Einstein's theories of special relativity and general relativity and is the most common mathematical structure on which special relativity is formulated. While the individual components in Euclidean space and time may differ due to length contraction and time dilation, in Minkowski spacetime, all frames of reference will agree on the total distance in spacetime between events.[nb 1] Because it treats time differently than it treats the 3 spatial dimensions, Minkowski space differs from four-dimensional Euclidean space.

In 3-dimensional Euclidean space (e.g., simply space in Galilean relativity), the isometry group (the maps preserving the regular Euclidean distance) is the Euclidean group. It is generated by rotations, reflections and translations. When time is appended as a fourth dimension, the further transformations of translations in time and Galilean boosts are added, and the group of all these transformations is called the Galilean group. All Galilean transformations preserve the 3-dimensional Euclidean distance. This distance is purely spatial. Time differences are separately preserved as well. This changes in the spacetime of special relativity, where space and time are interwoven.

Spacetime is equipped with an indefinite non-degenerate bilinear form, variously called the Minkowski metric,[3] the Minkowski norm squared or Minkowski inner product depending on the context.[nb 2] The Minkowski inner product is defined so as to yield the spacetime interval between two events when given their coordinate difference vector as argument.[4] Equipped with this inner product, the mathematical model of spacetime is called Minkowski space. The analogue of the Galilean group for Minkowski space, preserving the spacetime interval (as opposed to the spatial Euclidean distance) is the Poincaré group.
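As a minimal sketch of the statement above, the following Python snippet evaluates the Minkowski inner product on a coordinate difference vector and checks that a Lorentz boost leaves the resulting interval unchanged. The signature (+ − − −), units with c = 1, the sample vector, and the helper names `minkowski_inner` and `boost_x` are assumptions made for illustration.

```python
import math

# Assumed conventions for this sketch: signature (+ - - -) and c = 1.
ETA_DIAGONAL = (1.0, -1.0, -1.0, -1.0)


def minkowski_inner(u, v):
    """Minkowski inner product of two 4-vectors given as (t, x, y, z)."""
    return sum(g * a * b for g, a, b in zip(ETA_DIAGONAL, u, v))


def boost_x(v, beta):
    """Lorentz boost along the x-axis with velocity beta, where |beta| < 1."""
    gamma = 1.0 / math.sqrt(1.0 - beta * beta)
    t, x, y, z = v
    return (gamma * (t - beta * x), gamma * (x - beta * t), y, z)


# Coordinate difference between two events, as seen in two inertial frames.
delta = (5.0, 3.0, 0.0, 0.0)
delta_boosted = boost_x(delta, 0.6)

interval = minkowski_inner(delta, delta)
interval_boosted = minkowski_inner(delta_boosted, delta_boosted)

print(interval, interval_boosted)  # the two values agree up to rounding
```

The individual components of `delta` and `delta_boosted` differ, but the interval computed from them does not, which is the defining property of the Poincaré transformations mentioned above.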

As manifolds, Galilean spacetime and Minkowski spacetime are the same. They differ in what further structures are defined on them. The former has the Euclidean distance function and time interval (separately) together with inertial frames whose coordinates are related by Galilean transformations, while the latter has the Minkowski metric together with inertial frames whose coordinates are related by Poincaré transformations.

Linear involutions

To give a linear involution is the same as giving an involutory matrix, a square matrix A such that

$$A^2 = I, \qquad (1)$$

where I is the identity matrix.

It is a quick check that a square matrix D whose elements are all zero off the main diagonal and ±1 on the diagonal, that is, a signature matrix of the form

$$D = \begin{pmatrix} \pm 1 & 0 & \cdots & 0 \\ 0 & \pm 1 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \pm 1 \end{pmatrix},$$

satisfies (1), i.e. is the matrix of a linear involution. It turns out that all the matrices satisfying (1) are of the form

- $A = U^{-1} D U$,

where U is invertible and D is as above. That is to say, the matrix of any linear involution is of the form D up to a matrix similarity. Geometrically this means that any linear involution can be obtained by taking oblique reflections against any number from 0 through n hyperplanes going through the origin. (The term oblique reflection as used here includes ordinary reflections.)

One can easily verify that A represents a linear involution if and only if A has the form

- A = ±(2P - I)

for a linear projection P.
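The two characterizations above can be checked on a small example. The sketch below uses plain Python lists as matrices; the particular projection `P`, the invertible matrix `U`, and the helper names are illustrative choices, not part of the source.

```python
def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def is_involutory(a):
    """True when A @ A equals the identity matrix."""
    return matmul(a, a) == identity(len(a))


# An oblique projection onto the x-axis along the direction (1, -1): P @ P == P.
P = [[1, 1],
     [0, 0]]

# First form: A = 2P - I.
A_from_projection = [[2 * P[i][j] - identity(2)[i][j] for j in range(2)] for i in range(2)]

# Second form: A = U^{-1} D U with a signature matrix D.
U = [[1, 1],
     [0, 1]]
U_inverse = [[1, -1],
             [0, 1]]
D = [[1, 0],
     [0, -1]]
A_from_similarity = matmul(matmul(U_inverse, D), U)

print(A_from_projection, A_from_similarity)
print(is_involutory(A_from_projection), is_involutory(A_from_similarity))
```

For this choice of `P` and `U` both constructions give the same matrix, an oblique reflection that fixes the x-axis.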

Affine involutions

If A represents a linear involution, then x→A(x−b)+b is an affine involution. One can check that any affine involution in fact has this form. Geometrically this means that any affine involution can be obtained by taking oblique reflections against any number from 0 through n hyperplanes going through a point b.

Affine involutions can be categorized by the dimension of the affine space of fixed points; this corresponds to the number of values 1 on the diagonal of the similar matrix D (see above), i.e., the dimension of the eigenspace for eigenvalue 1.

The affine involutions in 3D are:

- the identity

- the oblique reflection with respect to a plane

- the oblique reflection with respect to a line

- the reflection with respect to a point.[2]
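To illustrate the form x → A(x − b) + b, here is a short sketch in two dimensions (chosen only to keep the numbers small). The matrix `A`, the point `b`, and the function name are assumptions for this example.

```python
def apply_affine_involution(A, b, x):
    """Return A(x - b) + b for a square matrix A and vectors b and x."""
    shifted = [xi - bi for xi, bi in zip(x, b)]
    image = [sum(aij * sj for aij, sj in zip(row, shifted)) for row in A]
    return [yi + bi for yi, bi in zip(image, b)]


A = [[1, 2],
     [0, -1]]   # satisfies A @ A = I (an oblique reflection)
b = [3, 4]      # the reflection line passes through this point
x = [7, -2]

once = apply_affine_involution(A, b, x)
twice = apply_affine_involution(A, b, once)

print(once, twice)                        # applying the map twice returns x
print(apply_affine_involution(A, b, b))   # b itself is a fixed point
```

The fixed points of this map form a line through `b`, so in the classification above it has a one-dimensional affine space of fixed points.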

Isometric involutions

In the case that the eigenspace for eigenvalue 1 is the orthogonal complement of that for eigenvalue −1, i.e., every eigenvector with eigenvalue 1 is orthogonal to every eigenvector with eigenvalue −1, such an affine involution is an isometry. The two extreme cases for which this always applies are the identity function and inversion in a point.

The other involutive isometries are inversion in a line (in 2D, 3D, and up; this is in 2D a reflection, and in 3D a rotation about the line by 180°), inversion in a plane (in 3D and up; in 3D this is a reflection in a plane), inversion in a 3D space (in 3D: the identity), etc.

Symmetric matrix

In linear algebra, a symmetric matrix is a square matrix that is equal to its transpose. Formally,

$$A \text{ is symmetric} \iff A = A^{\mathsf{T}}.$$

Because equal matrices have equal dimensions, only square matrices can be symmetric.

The entries of a symmetric matrix are symmetric with respect to the main diagonal. So if $a_{ij}$ denotes the entry in the $i$th row and $j$th column then

$$A \text{ is symmetric} \iff a_{ji} = a_{ij}$$

for all indices $i$ and $j$.

Every square diagonal matrix is symmetric, since all off-diagonal elements are zero. Similarly in characteristic different from 2, each diagonal element of a skew-symmetric matrix must be zero, since each is its own negative.

In linear algebra, a real symmetric matrix represents a self-adjoint operator[1] represented in an orthonormal basis over a real inner product space. The corresponding object for a complex inner product space is a Hermitian matrix with complex-valued entries, which is equal to its conjugate transpose. Therefore, in linear algebra over the complex numbers, it is often assumed that a symmetric matrix refers to one which has real-valued entries. Symmetric matrices appear naturally in a variety of applications, and typical numerical linear algebra software makes special accommodations for them.
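The distinction between symmetric and Hermitian matrices can be made concrete with a few lines of Python. This is only a sketch: matrices are stored as lists of rows, and the function names and sample matrices are chosen for illustration.

```python
def transpose(a):
    return [list(col) for col in zip(*a)]


def conjugate_transpose(a):
    return [[complex(x).conjugate() for x in col] for col in zip(*a)]


def is_symmetric(a):
    """A equals its transpose (this can only hold for square matrices)."""
    return a == transpose(a)


def is_hermitian(a):
    """A equals its conjugate transpose."""
    return a == conjugate_transpose(a)


real_symmetric = [[2, 7],
                  [7, 5]]
hermitian = [[2, 1 - 1j],
             [1 + 1j, 3]]
complex_symmetric = [[1, 1j],
                     [1j, 1]]
not_square = [[1, 2, 3],
              [2, 4, 5]]

for name, m in [("real symmetric", real_symmetric),
                ("hermitian", hermitian),
                ("complex symmetric", complex_symmetric),
                ("not square", not_square)]:
    print(name, is_symmetric(m), is_hermitian(m))
```

A real symmetric matrix passes both tests, while a matrix with genuinely complex entries can pass one test and fail the other.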

Raising and lowering of indices

Technically, a non-degenerate bilinear form provides a map between a vector space and its dual; in this context, the map is between the tangent spaces of M and the cotangent spaces of M. At a point in M, the tangent and cotangent spaces are dual vector spaces (so the dimension of the cotangent space at an event is also 4). Just as an authentic inner product on a vector space with one argument fixed, by Riesz representation theorem, may be expressed as the action of a linear functional on the vector space, the same holds for the Minkowski inner product of Minkowski space.[19]

Thus if $v^\mu$ are the components of a vector in a tangent space, then $\eta_{\mu\nu} v^\mu = v_\nu$ are the components of a vector in the cotangent space (a linear functional). Due to the identification of vectors in tangent spaces with vectors in M itself, this is mostly ignored, and vectors with lower indices are referred to as covariant vectors. In this latter interpretation, the covariant vectors are (almost always implicitly) identified with vectors (linear functionals) in the dual of Minkowski space. The ones with upper indices are contravariant vectors. In the same fashion, the inverse of the map from tangent to cotangent spaces, explicitly given by the inverse of η in matrix representation, can be used to define raising of an index. The components of this inverse are denoted $\eta^{\mu\nu}$. It happens that $\eta^{\mu\nu} = \eta_{\mu\nu}$. These maps between a vector space and its dual can be denoted η♭ (eta-flat) and η♯ (eta-sharp) by the musical analogy.[20]
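Lowering and raising an index amounts to a matrix-vector product with η or its inverse. The sketch below assumes Cartesian inertial coordinates and the signature (+ − − −); the function names and sample vectors are illustrative.

```python
# Minkowski metric in Cartesian inertial coordinates, signature (+ - - -).
ETA = [[1, 0, 0, 0],
       [0, -1, 0, 0],
       [0, 0, -1, 0],
       [0, 0, 0, -1]]

# For this matrix the inverse has the same components as ETA itself.
ETA_INVERSE = ETA


def lower_index(v_upper):
    """v_nu = eta_{mu nu} v^mu (summed over mu)."""
    return [sum(ETA[mu][nu] * v_upper[mu] for mu in range(4)) for nu in range(4)]


def raise_index(v_lower):
    """v^mu = eta^{mu nu} v_nu (summed over nu)."""
    return [sum(ETA_INVERSE[mu][nu] * v_lower[nu] for nu in range(4)) for mu in range(4)]


v = [2, 1, 0, 3]
w = [1, 1, 1, 1]

v_lower = lower_index(v)

# The inner product eta(v, w) equals the covector v_lower acting on w.
inner_direct = sum(ETA[mu][nu] * v[mu] * w[nu] for mu in range(4) for nu in range(4))
inner_via_covector = sum(a * b for a, b in zip(v_lower, w))

print(v_lower, raise_index(v_lower), inner_direct, inner_via_covector)
```

Lowering flips the sign of the spatial components and raising undoes it, which is the componentwise content of the remark that the inverse of η has the same components as η.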

Contravariant and covariant vectors are geometrically very different objects. The first can and should be thought of as arrows. A linear functional can be characterized by two objects: its kernel, which is a hyperplane passing through the origin, and its norm. Geometrically thus, covariant vectors should be viewed as a set of hyperplanes, with spacing depending on the norm (bigger = smaller spacing), with one of them (the kernel) passing through the origin. The mathematical term for a covariant vector is 1-covector or 1-form (though the latter is usually reserved for covector fields).

Misner, Thorne & Wheeler (1973) use a vivid analogy with wave fronts of a de Broglie wave (scaled by a factor of Planck's reduced constant) quantum mechanically associated to a momentum four-vector to illustrate how one could imagine a covariant version of a contravariant vector. The inner product of two contravariant vectors could equally well be thought of as the action of the covariant version of one of them on the contravariant version of the other. The inner product is then how many times the arrow pierces the planes. The mathematical reference, Lee (2003), offers the same geometrical view of these objects (but mentions no piercing).

The electromagnetic field tensor is a differential 2-form, whose geometrical description can also be found in MTW.

One may, of course, ignore geometrical views altogether (as is the style in e.g. Weinberg (2002) and Landau & Lifshitz (2002)) and proceed algebraically in a purely formal fashion. The time-proven robustness of the formalism itself, sometimes referred to as index gymnastics, ensures that moving vectors around and changing from contravariant to covariant vectors and vice versa (as well as higher order tensors) is mathematically sound. Incorrect expressions tend to reveal themselves quickly.

Metric space

In mathematics, a metric space is a set together with a notion of distance between its elements, usually called points. The distance is measured by a function called a metric or distance function.[1] Metric spaces are the most general setting for studying many of the concepts of mathematical analysis and geometry.

The most familiar example of a metric space is 3-dimensional Euclidean space with its usual notion of distance. Other well-known examples are a sphere equipped with the angular distance and the hyperbolic plane. A metric may correspond to a metaphorical, rather than physical, notion of distance: for example, the set of 100-character Unicode strings can be equipped with the Hamming distance, which measures the number of characters that need to be changed to get from one string to another.

Since they are very general, metric spaces are a tool used in many different branches of mathematics. Many types of mathematical objects have a natural notion of distance and therefore admit the structure of a metric space, including Riemannian manifolds, normed vector spaces, and graphs. In abstract algebra, the p-adic numbers arise as elements of the completion of a metric structure on the rational numbers. Metric spaces are also studied in their own right in metric geometry[2] and analysis on metric spaces.[3]

Many of the basic notions of mathematical analysis, including balls, completeness, as well as uniform, Lipschitz, and Hölder continuity, can be defined in the setting of metric spaces. Other notions, such as continuity, compactness, and open and closed sets, can be defined for metric spaces, but also in the even more general setting of topological spaces.


Functions between metric spaces

Unlike in the case of topological spaces or algebraic structures such as groups or rings, there is no single "right" type of structure-preserving function between metric spaces. Instead, one works with different types of functions depending on one's goals. Throughout this section, suppose that $(M_1, d_1)$ and $(M_2, d_2)$ are two metric spaces. The words "function" and "map" are used interchangeably.

For in Stance

When word is associated with color

there is no infinite regress possible

Quasi-isometry

In mathematics, a quasi-isometry is a function between two metric spaces that respects large-scale geometry of these spaces and ignores their small-scale details. Two metric spaces are quasi-isometric if there exists a quasi-isometry between them. The property of being quasi-isometric behaves like an equivalence relation on the class of metric spaces.

The concept of quasi-isometry is especially important in geometric group theory, following the work of Gromov.[1]


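Definition

Suppose that $f$ is a (not necessarily continuous) function from one metric space $(M_1, d_1)$ to a second metric space $(M_2, d_2)$. Then $f$ is called a quasi-isometry from $(M_1, d_1)$ to $(M_2, d_2)$ if there exist constants $A \geq 1$, $B \geq 0$, and $C \geq 0$ such that the following two properties both hold:[2]

- For every two points $x$ and $y$ in $M_1$, the distance between their images is, up to the additive constant $B$, within a factor of $A$ of their original distance: $$\frac{1}{A}\, d_1(x, y) - B \leq d_2(f(x), f(y)) \leq A\, d_1(x, y) + B.$$

- Every point of $M_2$ is within the constant distance $C$ of an image point: for every $z \in M_2$ there exists $x \in M_1$ with $d_2(z, f(x)) \leq C$.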
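A standard example is the floor map from the real line to the integers, which is neither continuous nor injective yet is a quasi-isometry. The sketch below assumes the usual two conditions (a two-sided distance bound with constants A and B, and every target point lying within distance C of the image) and checks them on a finite sample with A = 1, B = 1, C = 0; it is a numerical spot check, not a proof, and all names are illustrative.

```python
import math


def d(x, y):
    """Usual distance on the real line (and on the integers inside it)."""
    return abs(x - y)


def f(x):
    """Floor map from the reals to the integers."""
    return math.floor(x)


A, B, C = 1, 1, 0

samples = [k / 4 for k in range(-40, 41)]   # -10.0, -9.75, ..., 10.0
targets = range(-10, 11)                    # integers covered by the samples

distance_bounds_hold = all(
    d(x, y) / A - B <= d(f(x), f(y)) <= A * d(x, y) + B
    for x in samples
    for y in samples
)
coarsely_onto = all(any(d(z, f(x)) <= C for x in samples) for z in targets)

# The map collapses distinct points, so it does not preserve distances exactly.
collapses_points = d(f(0.25), f(0.75)) == 0

print(distance_bounds_hold, coarsely_onto, collapses_points)
```

The additive constant B is what absorbs the small-scale distortion: distances shorter than 1 can be collapsed to 0, while large distances are preserved up to a bounded error.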

Isometries

One interpretation of a "structure-preserving" map is one that fully preserves the distance function:

- A function $f : M_1 \to M_2$ is distance-preserving[12] if for every pair of points x and y in M1, $$d_2(f(x), f(y)) = d_1(x, y).$$

It follows from the metric space axioms that a distance-preserving function is injective. A bijective distance-preserving function is called an isometry.[13] One perhaps non-obvious example of an isometry between spaces described in this article is the map $f$ from $\mathbb{R}^2$ with the taxicab metric to $\mathbb{R}^2$ with the maximum metric defined by

$$f(x, y) = (x + y,\; x - y).$$

If there is an isometry between the spaces M1 and M2, they are said to be isometric. Metric spaces that are isometric are essentially identical.
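A distance-preserving map can be tested numerically on sample points. The sketch below uses one well-known example, the map (x, y) ↦ (x + y, x − y) from the plane with the taxicab metric to the plane with the maximum metric; the choice of this example, the integer grid, and the function names are assumptions made for illustration, and a finite check is of course not a proof.

```python
from itertools import product


def taxicab(p, q):
    """Sum of absolute coordinate differences."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def maximum_metric(p, q):
    """Largest absolute coordinate difference."""
    return max(abs(p[0] - q[0]), abs(p[1] - q[1]))


def f(p):
    x, y = p
    return (x + y, x - y)


grid = list(product(range(-3, 4), repeat=2))

f_preserves_distance = all(
    maximum_metric(f(p), f(q)) == taxicab(p, q) for p in grid for q in grid
)

# For contrast, the identity map between the same two spaces is not distance-preserving.
identity_preserves_distance = all(
    maximum_metric(p, q) == taxicab(p, q) for p in grid for q in grid
)

print(f_preserves_distance, identity_preserves_distance)
```

The check relies on the identity max(|a + b|, |a − b|) = |a| + |b|, applied to the coordinate differences a and b.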

Definition and illustration

Motivation

To see the utility of different notions of distance, consider the surface of the Earth as a set of points. We can measure the distance between two such points by the length of the shortest path along the surface, "as the crow flies"; this is particularly useful for shipping and aviation. We can also measure the straight-line distance between two points through the Earth's interior; this notion is, for example, natural in seismology, since it roughly corresponds to the length of time it takes for seismic waves to travel between those two points.

The notion of distance encoded by the metric space axioms has relatively few requirements. This generality gives metric spaces a lot of flexibility. At the same time, the notion is strong enough to encode many intuitive facts about what distance means. This means that general results about metric spaces can be applied in many different contexts.

Like many fundamental mathematical concepts, the metric on a metric space can be interpreted in many different ways. A particular metric may be best thought of not as measuring physical distance but as the cost of changing from one state to another (as with Wasserstein metrics on spaces of measures) or the degree of difference between two objects (for example, the Hamming distance between two strings of characters, or the Gromov–Hausdorff distance between metric spaces themselves).


Definition[edit]

Formally, a metric space is an ordered pair (M, d) where M is a set and d is a metric on M, i.e., a function

satisfying the following axioms for all points :[4][5]- The distance from a point to itself is zero:Intuitively, it never costs anything to travel from a point to itself.

- (Positivity) The distance between two distinct points is always positive:

- (Symmetry) The distance from x to y is always the same as the distance from y to x:This excludes asymmetric notions of "cost" which arise naturally from the observation that it's harder to walk uphill than downhill.

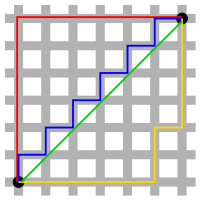

- The triangle inequality holds:This is a natural property of both physical and metaphorical notions of distance: you can arrive at z from x by taking a detour through y, but this will not make your journey any faster than the shortest path.

If the metric d is unambiguous, one often refers by abuse of notation to "the metric space M".

Formally, a metric space is an ordered pair (M, d) where M is a set and d is a metric on M, i.e., a function

- The distance from a point to itself is zero:Intuitively, it never costs anything to travel from a point to itself.

- (Positivity) The distance between two distinct points is always positive:

- (Symmetry) The distance from x to y is always the same as the distance from y to x:This excludes asymmetric notions of "cost" which arise naturally from the observation that it's harder to walk uphill than downhill.

- The triangle inequality holds:This is a natural property of both physical and metaphorical notions of distance: you can arrive at z from x by taking a detour through y, but this will not make your journey any faster than the shortest path.

If the metric d is unambiguous, one often refers by abuse of notation to "the metric space M".
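The four axioms can be checked by brute force on a small finite set. The sketch below does this for the Hamming distance on three-letter words over a two-letter alphabet, and contrasts it with the squared Hamming distance, which fails the triangle inequality. The helper names and the sample set are illustrative choices.

```python
from itertools import product


def hamming(s, t):
    """Number of positions at which two equal-length strings differ."""
    if len(s) != len(t):
        raise ValueError("Hamming distance needs strings of equal length")
    return sum(a != b for a, b in zip(s, t))


def squared_hamming(s, t):
    return hamming(s, t) ** 2


def satisfies_metric_axioms(points, d):
    """Check the four metric axioms on every pair and triple of the given points."""
    for x in points:
        if d(x, x) != 0:                      # distance to itself is zero
            return False
    for x, y in product(points, repeat=2):
        if x != y and not d(x, y) > 0:        # positivity
            return False
        if d(x, y) != d(y, x):                # symmetry
            return False
    for x, y, z in product(points, repeat=3):
        if d(x, z) > d(x, y) + d(y, z):       # triangle inequality
            return False
    return True


words = ["".join(letters) for letters in product("ab", repeat=3)]

print(satisfies_metric_axioms(words, hamming))
print(satisfies_metric_axioms(words, squared_hamming))
```

The squared version fails because, for example, going from "aaa" to "abb" costs 4 directly but only 1 + 1 via "aab".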

Simple examples

Coordinate free raising and lowering

Pauli matrices

In mathematical physics and mathematics, the Pauli matrices are a set of three 2 × 2 complex matrices which are Hermitian, involutory and unitary.[1] Usually indicated by the Greek letter sigma (σ), they are occasionally denoted by tau (τ) when used in connection with isospin symmetries.

These matrices are named after the physicist Wolfgang Pauli. In quantum mechanics, they occur in the Pauli equation which takes into account the interaction of the spin of a particle with an external electromagnetic field. They also represent the interaction states of two polarization filters for horizontal/vertical polarization, 45 degree polarization (right/left), and circular polarization (right/left).

Each Pauli matrix is Hermitian, and together with the identity matrix I (sometimes considered as the zeroth Pauli matrix σ0), the Pauli matrices form a basis for the real vector space of 2 × 2 Hermitian matrices. This means that any 2 × 2 Hermitian matrix can be written in a unique way as a linear combination of Pauli matrices, with all coefficients being real numbers.

Hermitian operators represent observables in quantum mechanics, so the Pauli matrices span the space of observables of the complex 2-dimensional Hilbert space. In the context of Pauli's work, $\sigma_k$ represents the observable corresponding to spin along the kth coordinate axis in three-dimensional Euclidean space $\mathbb{R}^3$.

The Pauli matrices (after multiplication by i to make them anti-Hermitian) also generate transformations in the sense of Lie algebras: the matrices $i\sigma_1$, $i\sigma_2$, $i\sigma_3$ form a basis for the real Lie algebra $\mathfrak{su}(2)$, which exponentiates to the special unitary group SU(2).[a] The algebra generated by the three matrices $\sigma_1$, $\sigma_2$, $\sigma_3$ is isomorphic to the Clifford algebra of $\mathbb{R}^3$,[2] and the (unital associative) algebra generated by $i\sigma_1$, $i\sigma_2$, $i\sigma_3$ is effectively identical (isomorphic) to that of quaternions ($\mathbb{H}$).

Algebraic properties

All three of the Pauli matrices can be compacted into a single expression:

$$\sigma_j = \begin{pmatrix} \delta_{j3} & \delta_{j1} - i\,\delta_{j2} \\ \delta_{j1} + i\,\delta_{j2} & -\delta_{j3} \end{pmatrix},$$

where $i$ is the imaginary unit ($i^2 = -1$) and $\delta_{jk}$ is the Kronecker delta, which equals +1 if j = k and 0 otherwise. This expression is useful for "selecting" any one of the matrices numerically by substituting values of j = 1, 2, 3, which in turn is useful when any of the matrices (but no particular one) is to be used in algebraic manipulations.

The matrices are involutory:

$$\sigma_1^2 = \sigma_2^2 = \sigma_3^2 = -i\,\sigma_1 \sigma_2 \sigma_3 = I,$$

where I is the identity matrix.

The determinants and traces of the Pauli matrices are:

$$\det \sigma_j = -1, \qquad \operatorname{tr} \sigma_j = 0,$$

from which we can deduce that each matrix $\sigma_j$ has eigenvalues +1 and −1.

With the inclusion of the identity matrix, I (sometimes denoted $\sigma_0$), the Pauli matrices form an orthogonal basis (in the sense of Hilbert–Schmidt) of the Hilbert space of 2 × 2 Hermitian matrices over $\mathbb{R}$, and of the Hilbert space of all complex 2 × 2 matrices over $\mathbb{C}$.
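The algebraic properties listed in this section are easy to verify by direct computation. The sketch below builds each matrix from the Kronecker-delta form, assuming the usual convention σ1 = [[0, 1], [1, 0]], σ2 = [[0, −i], [i, 0]], σ3 = [[1, 0], [0, −1]], and then checks the claims; all helper names are illustrative.

```python
def delta(j, k):
    """Kronecker delta."""
    return 1 if j == k else 0


def sigma(j):
    """Pauli matrix number j (1, 2 or 3) from the Kronecker-delta expression."""
    return [
        [delta(j, 3), delta(j, 1) - 1j * delta(j, 2)],
        [delta(j, 1) + 1j * delta(j, 2), -delta(j, 3)],
    ]


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def dagger(m):
    """Conjugate transpose."""
    return [[complex(x).conjugate() for x in col] for col in zip(*m)]


IDENTITY = [[1, 0], [0, 1]]
EXPLICIT = {
    1: [[0, 1], [1, 0]],
    2: [[0, -1j], [1j, 0]],
    3: [[1, 0], [0, -1]],
}

checks = {}
for j in (1, 2, 3):
    s = sigma(j)
    det = s[0][0] * s[1][1] - s[0][1] * s[1][0]
    trace = s[0][0] + s[1][1]
    checks[j] = {
        "matches_explicit": s == EXPLICIT[j],
        "involutory": matmul(s, s) == IDENTITY,
        "hermitian": dagger(s) == s,
        "unitary": matmul(dagger(s), s) == IDENTITY,
        "det": det,
        "trace": trace,
        # +1 and -1 are roots of the characteristic polynomial t^2 - trace*t + det.
        "eigenvalues_pm1": all(t * t - trace * t + det == 0 for t in (1, -1)),
    }

for j, result in checks.items():
    print(j, result)
```

Because the entries are small integers and multiples of i, every comparison here is exact and no floating-point tolerance is needed.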

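Eigenvectors and eigenvalues

Each of the (Hermitian) Pauli matrices has two eigenvalues, +1 and −1. The corresponding normalized eigenvectors, for $\sigma_1$, $\sigma_2$ and $\sigma_3$ respectively, are:

$$\begin{aligned} \psi_{x+} &= \frac{1}{\sqrt{2}} \begin{pmatrix} 1 \\ 1 \end{pmatrix}, & \psi_{x-} &= \frac{1}{\sqrt{2}} \begin{pmatrix} 1 \\ -1 \end{pmatrix}, \\ \psi_{y+} &= \frac{1}{\sqrt{2}} \begin{pmatrix} 1 \\ i \end{pmatrix}, & \psi_{y-} &= \frac{1}{\sqrt{2}} \begin{pmatrix} 1 \\ -i \end{pmatrix}, \\ \psi_{z+} &= \begin{pmatrix} 1 \\ 0 \end{pmatrix}, & \psi_{z-} &= \begin{pmatrix} 0 \\ 1 \end{pmatrix}. \end{aligned}$$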

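Pauli vector

The Pauli vector is defined by[b]

$$\vec{\sigma} = \sigma_1 \hat{x}_1 + \sigma_2 \hat{x}_2 + \sigma_3 \hat{x}_3,$$

where $\hat{x}_1$, $\hat{x}_2$, $\hat{x}_3$ are the unit vectors along the coordinate axes.

The Pauli vector provides a mapping mechanism from a vector basis to a Pauli matrix basis[3] as follows,

$$\vec{a} \cdot \vec{\sigma} = \sum_{k} a_k \sigma_k = \begin{pmatrix} a_3 & a_1 - i a_2 \\ a_1 + i a_2 & -a_3 \end{pmatrix}.$$

More formally, this defines a map from $\mathbb{R}^3$ to the vector space of traceless Hermitian 2 × 2 matrices. This map encodes structures of $\mathbb{R}^3$ as a normed vector space and as a Lie algebra (with the cross-product as its Lie bracket) via functions of matrices, making the map an isomorphism of Lie algebras. This makes the Pauli matrices intertwiners from the point of view of representation theory.

Another way to view the Pauli vector is as a Hermitian traceless matrix-valued dual vector, that is, an element of $\mathrm{Mat}_{2 \times 2}(\mathbb{C}) \otimes (\mathbb{R}^3)^*$ which maps $\vec{a} \mapsto \vec{a} \cdot \vec{\sigma}$.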
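The way this map encodes the norm and the cross product can be illustrated numerically. The sketch below assumes the standard matrix form of a·σ, namely [[a3, a1 − i a2], [a1 + i a2, −a3]], and checks two standard identities on sample integer vectors: (a·σ)² = |a|² I, and [a·σ, b·σ] = 2i (a × b)·σ. The function names and sample vectors are illustrative.

```python
def pauli_dot(a):
    """Matrix a1*sigma1 + a2*sigma2 + a3*sigma3 for a real 3-vector a."""
    a1, a2, a3 = a
    return [[a3, a1 - 1j * a2],
            [a1 + 1j * a2, -a3]]


def matmul(m, n):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*n)] for row in m]


def commutator(m, n):
    mn, nm = matmul(m, n), matmul(n, m)
    return [[mn[i][j] - nm[i][j] for j in range(2)] for i in range(2)]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


a = (1, 2, 2)
b = (0, 1, -1)

A = pauli_dot(a)
B = pauli_dot(b)

norm_squared = sum(x * x for x in a)
square_is_norm = matmul(A, A) == [[norm_squared, 0], [0, norm_squared]]

bracket = commutator(A, B)
expected_bracket = [[2j * entry for entry in row] for row in pauli_dot(cross(a, b))]
bracket_is_cross_product = bracket == expected_bracket

trace_is_zero = A[0][0] + A[1][1] == 0

print(square_is_norm, bracket_is_cross_product, trace_is_zero)
```

The factor 2i in the commutator identity is a matter of normalization; the cross product of the vectors is carried to the commutator of the matrices up to that fixed factor.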

Properties

For any unitary matrix U of finite size, the following hold:

- Given two complex vectors x and y, multiplication by U preserves their inner product; that is, ⟨Ux, Uy⟩ = ⟨x, y⟩.

- U is normal ($U^* U = U U^*$).

- U is diagonalizable; that is, U is unitarily similar to a diagonal matrix, as a consequence of the spectral theorem. Thus, U has a decomposition of the form $U = V D V^*$, where V is unitary, and D is diagonal and unitary.

- $|\det(U)| = 1$.

- Its eigenspaces are orthogonal.

- U can be written as $U = e^{iH}$, where e indicates the matrix exponential, i is the imaginary unit, and H is a Hermitian matrix.

For any nonnegative integer n, the set of all n × n unitary matrices with matrix multiplication forms a group, called the unitary group U(n).

Any square matrix with unit Euclidean norm is the average of two unitary matrices.[1]
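Several of the listed properties can be observed on a concrete example. The sketch below builds a unitary matrix as U = e^{iH} from a random Hermitian H, computing the exponential through the eigendecomposition of H so that only NumPy is needed. The size, seed, and variable names are arbitrary choices for this illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 4

# Random Hermitian matrix H = (M + M*) / 2.
M = rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))
H = (M + M.conj().T) / 2

# Spectral decomposition H = V diag(w) V*, with V unitary and w real.
w, V = np.linalg.eigh(H)

# U = e^{iH} = V D V*, where D = diag(e^{i w}) is diagonal and unitary.
D = np.diag(np.exp(1j * w))
U = V @ D @ V.conj().T

# Inner products are preserved: <Ux, Uy> = <x, y>.
x = rng.normal(size=n) + 1j * rng.normal(size=n)
y = rng.normal(size=n) + 1j * rng.normal(size=n)
inner_before = np.vdot(x, y)          # vdot conjugates its first argument
inner_after = np.vdot(U @ x, U @ y)

det_modulus = abs(np.linalg.det(U))   # expected to be 1 up to rounding
eigenvalue_moduli = np.abs(np.linalg.eigvals(U))

print(np.allclose(U.conj().T @ U, np.eye(n)), det_modulus)
```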

Equivalent conditions

If U is a square, complex matrix, then the following conditions are equivalent:[2]

- $U$ is unitary.

- $U^*$ is unitary.

- $U$ is invertible with $U^{-1} = U^*$.

- The columns of $U$ form an orthonormal basis of $\mathbb{C}^n$ with respect to the usual inner product. In other words, $U^* U = I$.

- The rows of $U$ form an orthonormal basis of $\mathbb{C}^n$ with respect to the usual inner product. In other words, $U U^* = I$.

- $U$ is an isometry with respect to the usual norm. That is, $\|Ux\|_2 = \|x\|_2$ for all $x \in \mathbb{C}^n$, where $\|x\|_2 = \sqrt{\sum_{i=1}^{n} |x_i|^2}$.

- $U$ is a normal matrix (equivalently, there is an orthonormal basis formed by eigenvectors of $U$) with eigenvalues lying on the unit circle.
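Because the conditions are equivalent, they should all agree on any given square matrix. The sketch below evaluates four of them for a unitary matrix (the Q factor of a QR decomposition) and for a scaled copy that is not unitary. The function name `unitarity_checks` and its parameters are hypothetical, and the isometry test only samples a few random vectors, so it is evidence rather than proof.

```python
import numpy as np


def unitarity_checks(U, n_samples=5, seed=0, tol=1e-10):
    """Evaluate several equivalent unitarity conditions for a square matrix."""
    U = np.asarray(U, dtype=complex)
    n = U.shape[0]
    identity = np.eye(n)
    Uh = U.conj().T  # conjugate transpose U*

    # Isometry condition, tested on random sample vectors (columns of X).
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, n_samples)) + 1j * rng.normal(size=(n, n_samples))
    norms_preserved = np.allclose(
        np.linalg.norm(U @ X, axis=0), np.linalg.norm(X, axis=0), atol=tol
    )

    is_normal = np.allclose(Uh @ U, U @ Uh, atol=tol)
    unit_eigenvalues = np.allclose(np.abs(np.linalg.eigvals(U)), 1.0, atol=tol)

    return {
        "columns_orthonormal": bool(np.allclose(Uh @ U, identity, atol=tol)),
        "rows_orthonormal": bool(np.allclose(U @ Uh, identity, atol=tol)),
        "isometry_on_samples": bool(norms_preserved),
        "normal_with_unit_eigenvalues": bool(is_normal and unit_eigenvalues),
    }


rng = np.random.default_rng(1)
M = rng.normal(size=(3, 3)) + 1j * rng.normal(size=(3, 3))
Q, _ = np.linalg.qr(M)           # Q is unitary for a square complex input

unitary_report = unitarity_checks(Q)
scaled_report = unitarity_checks(2 * Q)   # normal, but eigenvalues have modulus 2

print(unitary_report)
print(scaled_report)
```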

Elementary constructions

2 × 2 unitary matrix

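The general expression of a 2 × 2 unitary matrix is

$$U = \begin{bmatrix} a & b \\ -e^{i\varphi} b^* & e^{i\varphi} a^* \end{bmatrix}, \qquad |a|^2 + |b|^2 = 1,$$

which depends on 4 real parameters (the phase of a, the phase of b, the relative magnitude between a and b, and the angle φ). The determinant of such a matrix is

$$\det(U) = e^{i\varphi}.$$

The sub-group of those elements with $\det(U) = 1$ is called the special unitary group SU(2).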

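The matrix U can also be written in this alternative form:

$$U = e^{i\varphi/2} \begin{bmatrix} e^{i\varphi_1} \cos\theta & e^{i\varphi_2} \sin\theta \\ -e^{-i\varphi_2} \sin\theta & e^{-i\varphi_1} \cos\theta \end{bmatrix},$$

which, by introducing φ1 = ψ + Δ and φ2 = ψ − Δ, takes the following factorization:

$$U = e^{i\varphi/2} \begin{bmatrix} e^{i\psi} & 0 \\ 0 & e^{-i\psi} \end{bmatrix} \begin{bmatrix} \cos\theta & \sin\theta \\ -\sin\theta & \cos\theta \end{bmatrix} \begin{bmatrix} e^{i\Delta} & 0 \\ 0 & e^{-i\Delta} \end{bmatrix}.$$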

This expression highlights the relation between 2 × 2 unitary matrices and 2 × 2 orthogonal matrices of angle θ.
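The parametrization and its factorization can be compared numerically. The sketch below assumes the angle-based form spelled out in the code comments (a global phase e^{iφ/2} multiplying a matrix built from φ1, φ2 and θ); the function names and sample angles are hypothetical.

```python
import numpy as np


def unitary_2x2(phi, phi1, phi2, theta):
    """Assumed form: e^{i phi/2} [[ e^{i phi1} cos t,  e^{i phi2} sin t],
                                  [-e^{-i phi2} sin t, e^{-i phi1} cos t]]."""
    c, s = np.cos(theta), np.sin(theta)
    core = np.array([
        [np.exp(1j * phi1) * c, np.exp(1j * phi2) * s],
        [-np.exp(-1j * phi2) * s, np.exp(-1j * phi1) * c],
    ])
    return np.exp(1j * phi / 2) * core


def factored_2x2(phi, psi, delta, theta):
    """Same matrix as a product: phase * diag(psi) * rotation(theta) * diag(delta)."""
    c, s = np.cos(theta), np.sin(theta)
    left = np.diag([np.exp(1j * psi), np.exp(-1j * psi)])
    rotation = np.array([[c, s], [-s, c]])   # real orthogonal matrix of angle theta
    right = np.diag([np.exp(1j * delta), np.exp(-1j * delta)])
    return np.exp(1j * phi / 2) * (left @ rotation @ right)


phi, psi, delta, theta = 0.7, 0.4, -1.1, 0.9
U_direct = unitary_2x2(phi, psi + delta, psi - delta, theta)  # phi1, phi2 from psi, delta
U_factored = factored_2x2(phi, psi, delta, theta)

print(np.allclose(U_direct, U_factored))
print(np.isclose(np.linalg.det(U_direct), np.exp(1j * phi)))
```

Setting φ = 0 in this sketch yields determinant 1, that is, an element of SU(2).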

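Another factorization is[3]

$$U = \begin{bmatrix} \cos\rho & -\sin\rho \\ \sin\rho & \cos\rho \end{bmatrix} \begin{bmatrix} e^{i\xi} & 0 \\ 0 & e^{i\zeta} \end{bmatrix} \begin{bmatrix} \cos\sigma & \sin\sigma \\ -\sin\sigma & \cos\sigma \end{bmatrix}.$$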

Many other factorizations of a unitary matrix into basic matrices are possible.[4][5][6][7]

Unitary matrix

In linear algebra, a complex square matrix U is unitary if its conjugate transpose U* is also its inverse, that is, if

$$U^* U = U U^* = I,$$

where I is the identity matrix.

In physics, especially in quantum mechanics, the conjugate transpose is referred to as the Hermitian adjoint of a matrix and is denoted by a dagger (†), so the equation above is written

$$U^\dagger U = U U^\dagger = I.$$

The real analogue of a unitary matrix is an orthogonal matrix. Unitary matrices have significant importance in quantum mechanics because they preserve norms, and thus, probability amplitudes.

Involutory matrix

In mathematics, an involutory matrix is a square matrix that is its own inverse. That is, multiplication by the n × n matrix A is an involution if and only if $A^2 = I$, where I is the n × n identity matrix. Involutory matrices are all square roots of the identity matrix. This is simply a consequence of the fact that any nonsingular matrix multiplied by its inverse is the identity.[1]

The 2 × 2 real matrix $\begin{pmatrix} a & b \\ c & -a \end{pmatrix}$ is involutory provided that $a^2 + bc = 1$.[2]
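A short derivation shows where the condition comes from, assuming the matrix in question is the trace-zero matrix with rows (a, b) and (c, −a). Squaring it gives a scalar multiple of the identity, so it is its own inverse exactly when that scalar is 1:

```latex
\begin{pmatrix} a & b \\ c & -a \end{pmatrix}^{2}
= \begin{pmatrix} a^{2} + bc & ab - ba \\ ca - ac & cb + a^{2} \end{pmatrix}
= (a^{2} + bc) \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix},
\qquad\text{so}\qquad
A^{2} = I \iff a^{2} + bc = 1 .
```

For instance, a = 0 and b = c = 1 give the row-swap matrix, and a = 1 with b = c = 0 gives diag(1, −1).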

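The Pauli matrices in M(2, C) are involutory:

$$\sigma_1 = \begin{pmatrix} 0 & 1 \\ 1 & 0 \end{pmatrix}, \qquad \sigma_2 = \begin{pmatrix} 0 & -i \\ i & 0 \end{pmatrix}, \qquad \sigma_3 = \begin{pmatrix} 1 & 0 \\ 0 & -1 \end{pmatrix}.$$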

One of the three classes of elementary matrix is involutory, namely the row-interchange elementary matrix. A special case of another class of elementary matrix, that which represents multiplication of a row or column by −1, is also involutory; it is in fact a trivial example of a signature matrix, all of which are involutory.

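Some simple examples of involutory matrices are shown below.

$$I = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix}, \qquad R = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 0 & 1 \\ 0 & 1 & 0 \end{pmatrix}, \qquad S = \begin{pmatrix} 1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & -1 \end{pmatrix},$$

where

- I is the 3 × 3 identity matrix (which is trivially involutory);

- R is the 3 × 3 identity matrix with a pair of interchanged rows;

- S is a signature matrix.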

Any block-diagonal matrix constructed from involutory matrices is also involutory, because the square of a block-diagonal matrix is the block-diagonal matrix of the squared blocks.

Symmetry

An involutory matrix which is also symmetric is an orthogonal matrix, and thus represents an isometry (a linear transformation which preserves Euclidean distance). Conversely every orthogonal involutory matrix is symmetric.[3] As a special case of this, every reflection and 180° rotation matrix is involutory.
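The link between symmetry, involution, and orthogonality can be seen on small examples. The sketch below uses a Householder-style reflection I − 2vvᵀ/(vᵀv) and a 180° rotation about the z-axis, plus a non-symmetric involution for contrast. The function names and sample vector are choices made for this illustration.

```python
import numpy as np


def householder_reflection(v):
    """Reflection across the hyperplane orthogonal to the nonzero vector v."""
    v = np.asarray(v, dtype=float)
    return np.eye(v.size) - 2.0 * np.outer(v, v) / np.dot(v, v)


def describe(M):
    """Report whether M is involutory, symmetric, and orthogonal."""
    identity = np.eye(M.shape[0])
    return {
        "involutory": bool(np.allclose(M @ M, identity)),
        "symmetric": bool(np.allclose(M, M.T)),
        "orthogonal": bool(np.allclose(M.T @ M, identity)),
    }


reflection = householder_reflection([1.0, 2.0, 2.0])
half_turn = np.diag([-1.0, -1.0, 1.0])            # 180 degree rotation about z

# A non-symmetric involution: it squares to I but is not orthogonal.
oblique = np.array([[1.0, 1.0], [0.0, -1.0]])

for name, M in [("reflection", reflection), ("half_turn", half_turn),
                ("oblique", oblique)]:
    print(name, describe(M))
```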

Properties

An involution is non-defective, and each eigenvalue equals ±1, so an involution diagonalizes to a signature matrix.

A normal involution is Hermitian (complex) or symmetric (real) and also unitary (complex) or orthogonal (real).

The determinant of an involutory matrix over any field is ±1.[4]

If A is an n × n matrix, then A is involutory if and only if $P_+ = (I + A)/2$ is idempotent. This relation gives a bijection between involutory matrices and idempotent matrices.[4] Similarly, A is involutory if and only if $P_- = (I - A)/2$ is idempotent. These two operators form the symmetric and antisymmetric projections of a vector x with respect to the involution A, in the sense that $A P_\pm x = \pm P_\pm x$ for every x, or equivalently $A P_\pm = \pm P_\pm$. The same construct applies to any involutory function, such as the complex conjugate (real and imaginary parts), transpose (symmetric and antisymmetric matrices), and Hermitian adjoint (Hermitian and skew-Hermitian matrices).
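A small worked example may help here. The sketch below takes the 2 × 2 coordinate-swap matrix as the involution A (an arbitrary choice for illustration), forms P+ = (I + A)/2 and P− = (I − A)/2, and splits a sample vector into its symmetric and antisymmetric parts.

```python
import numpy as np

# Involution: swapping the two coordinates of a vector.
A = np.array([[0.0, 1.0], [1.0, 0.0]])
identity = np.eye(2)

P_plus = (identity + A) / 2    # projection onto vectors with A x = +x
P_minus = (identity - A) / 2   # projection onto vectors with A x = -x

x = np.array([3.0, 1.0])       # sample vector (hypothetical data)
x_sym = P_plus @ x             # symmetric part: [2, 2]
x_anti = P_minus @ x           # antisymmetric part: [1, -1]

print(x_sym, x_anti, x_sym + x_anti)
```

The two parts add back up to x, and applying A leaves the symmetric part unchanged while flipping the sign of the antisymmetric part.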

If A is an involutory matrix in M(n, R), a matrix algebra over the real numbers, then the subalgebra {x I + y A: x, y ∈ R} generated by A is isomorphic to the split-complex numbers.

If A and B are two involutory matrices which commute with each other (i.e. AB = BA) then AB is also involutory.

If A is an involutory matrix then every integer power of A is involutory. In fact, $A^n$ will be equal to A if n is odd and I if n is even.
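The last two properties can be illustrated with small matrices. The sketch below picks two commuting involutions and checks that their product is involutory, shows a non-commuting pair for which the product is not, and tabulates integer powers of A. All matrices and names here are example choices, not taken from the source.

```python
import numpy as np


def is_involutory(M):
    """True if M squared equals the identity (up to rounding)."""
    return bool(np.allclose(M @ M, np.eye(M.shape[0])))


# Two commuting involutions: a row swap and a signature matrix.
A = np.array([[0.0, 1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
B = np.diag([1.0, 1.0, -1.0])
commute = bool(np.allclose(A @ B, B @ A))
product_involutory = is_involutory(A @ B)

# A non-commuting pair: the product squares to -I, so it is not involutory.
C = np.array([[0.0, 1.0], [1.0, 0.0]])
D = np.diag([1.0, -1.0])
noncommuting_product_involutory = is_involutory(C @ D)

# Integer powers of A, including negative ones (computed via the inverse).
powers = {n: np.linalg.matrix_power(A, n) for n in range(-3, 4)}

print(commute, product_involutory, noncommuting_product_involutory)
```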
